Explosion of data in the energy industry

Big data is everywhere in today’s energy industry, and it’s getting bigger. To most people, energy data means consumption metrics; however, data is changing the market in every part of the value chain.

Generators use market data to optimise their dispatch, ramping flexible assets up and down in response to real-time and near-real-time supply and demand forecasts. To do this, they combine data from their own assets on plant operational performance, availability and technical parameters (which can vary, for example with ambient temperature) with market information on the availability of other assets and on prices.
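As a rough illustration of how these inputs combine, the sketch below makes a per-period dispatch decision for a single flexible plant. It is a minimal sketch under simplifying assumptions, not how any particular generator operates: the plant runs whenever the forecast price exceeds its marginal cost, its available capacity is derated linearly above an assumed reference ambient temperature, and a ramp limit caps how far output can move between periods. The `Plant` class, its parameters and `dispatch_schedule` are hypothetical; real dispatch models also account for start-up costs, minimum stable generation and contractual positions.

```python
from dataclasses import dataclass


@dataclass
class Plant:
    """Hypothetical flexible generator; every parameter here is illustrative."""
    rated_mw: float            # nameplate capacity in MW
    marginal_cost: float       # cost of generating, in £/MWh
    derate_per_degree: float   # fraction of capacity lost per °C above the reference
    max_ramp_mw: float         # largest change in output allowed between periods
    reference_temp_c: float = 15.0

    def available_mw(self, ambient_c: float) -> float:
        """Available capacity after an assumed linear ambient-temperature derating."""
        excess = max(0.0, ambient_c - self.reference_temp_c)
        return max(0.0, self.rated_mw * (1.0 - self.derate_per_degree * excess))


def dispatch_schedule(plant: Plant, prices: list[float], temps: list[float],
                      start_mw: float = 0.0) -> list[float]:
    """Per-period output: chase full availability when price beats marginal cost,
    otherwise ramp towards zero, never moving more than max_ramp_mw per step."""
    schedule = []
    output = start_mw
    for price, temp in zip(prices, temps):
        available = plant.available_mw(temp)
        target = available if price > plant.marginal_cost else 0.0
        # Move towards the target, limited by the ramp rate.
        step = max(-plant.max_ramp_mw, min(plant.max_ramp_mw, target - output))
        # Output can never exceed what the plant can physically deliver right now.
        output = max(0.0, min(output + step, available))
        schedule.append(output)
    return schedule


if __name__ == "__main__":
    plant = Plant(rated_mw=100.0, marginal_cost=50.0,
                  derate_per_degree=0.01, max_ramp_mw=60.0)
    # Three forecast periods: price in £/MWh, ambient temperature in °C.
    print(dispatch_schedule(plant, [40.0, 55.0, 70.0], [10.0, 25.0, 35.0]))
```

With these example inputs the plant stays off in the first period (price below cost), ramps to 60 MW in the second (held back by the ramp limit rather than the 90 MW available), and reaches its temperature-limited 80 MW in the third.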

Energy traders can balance and optimise their portfolios internationally, trading in the wholesale markets and on interconnectors. The more sophisticated players have invested in fast, flexible IT systems that allow them to exploit short-lived arbitrage opportunities wherever they arise.

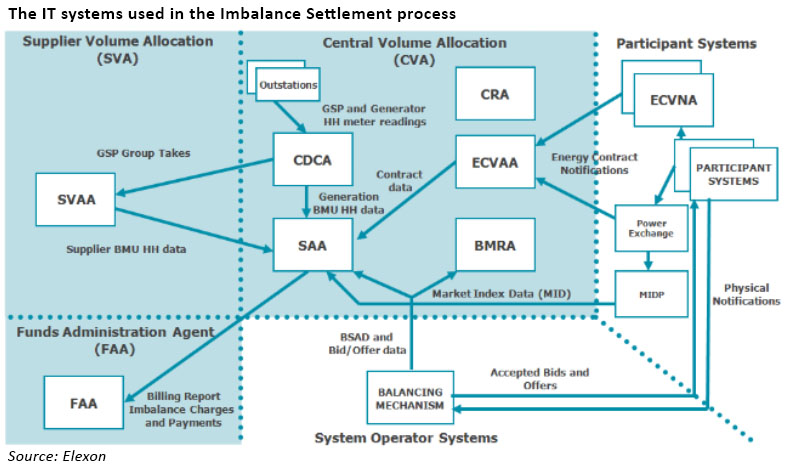

Elexon processes significant amounts of data in the electricity settlement process. The complexity of these arrangements means that small generators entering the market almost always need to partner with a large supplier in order to access the market and participate in embedded benefits sharing. The multiple revenue streams available under the different industry codes for distribution- or transmission-connected assets make settlement extremely complex, and the desire for new assets such as storage to “stack” revenues across multiple channels adds a further layer of complexity.

Data is essential for grid management. Transmission system operators (TSOs) rely on demand and generation forecasts to ensure that the grid is balanced at all times and that frequency remains stable. Historically, the system was simpler, and much of the data was based on models and forecasts because half-hourly metering was not widely deployed. As the electricity system becomes more decentralised and more complex, and as technology creates more opportunities for load management, the associated data is multiplying.
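Half-hourly metering matters because settlement and balancing in Great Britain are organised around half-hour settlement periods, 48 in a normal day. As a minimal sketch, assuming meter readings arrive as `(timestamp, kWh)` pairs stamped at the start of each interval, on a day without a clock change, the hypothetical helpers below roll finer-grained readings up into numbered half-hourly periods. Real settlement data must also handle 46- and 50-period clock-change days, missing reads and estimation, all of which this sketch ignores.

```python
from collections import defaultdict
from datetime import datetime


def settlement_period(ts: datetime) -> int:
    """Half-hourly period number, 1 (00:00-00:30) to 48 (23:30-24:00).
    Assumes local time on a day with no clock change."""
    return ts.hour * 2 + (1 if ts.minute < 30 else 2)


def aggregate_half_hourly(readings):
    """Sum interval readings into {(date, period): kWh} buckets.
    Each reading is assumed to be stamped at the start of its interval."""
    totals = defaultdict(float)
    for ts, kwh in readings:
        totals[(ts.date(), settlement_period(ts))] += kwh
    return dict(totals)


if __name__ == "__main__":
    readings = [
        (datetime(2017, 3, 1, 0, 0), 0.5),
        (datetime(2017, 3, 1, 0, 15), 0.25),
        (datetime(2017, 3, 1, 0, 45), 1.0),
        (datetime(2017, 3, 1, 23, 50), 2.0),
    ]
    for (day, period), kwh in sorted(aggregate_half_hourly(readings).items()):
        print(day, period, kwh)
```

Keying the totals by `(date, period)` rather than by period alone keeps readings from different days apart when a batch spans midnight.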

Much is made of the potential for digitising energy provision for consumers. Smart meters are expected to provide both users and suppliers with granular consumption data, and with the development of the Internet of Things (IoT) and the wide range of networked appliances expected to be available in future, huge data transfers are also anticipated. In the industrial and commercial (I&C) sector, so-called “lick and stick” transmitters, which are cheap and easy to install, are increasingly providing consumption data from a very wide range of industrial appliances as a first step towards demand optimisation.

The explosion of data in the energy markets presents a range of challenges (or opportunities, depending on your perspective) around data handling, data security and standardisation.

Data handling: collecting, storing and analysing big energy data

The term “Big Data” describes collections of data or documents so large and complex that they are difficult to process using on-hand database management tools or traditional data processing applications. “Big” can describe both the volume and diversity of the data, and the speed with which it is generated. Data analytics and data mining techniques have been developed to analyse large amounts of data in order to find patterns that may not otherwise be obvious. This can provide significant insights into a range of relevant areas from technical generation and grid operations to organisational and consumer behaviours.

The huge volumes of data now available in the energy sector present major data handling challenges. Historically, utilities have relied on on-site software and data centres; however, growing data volumes and the associated costs are leading utilities to consider other solutions. As cloud-based services have become more secure, companies increasingly see the value of managing their data needs off-site, and cloud-based data analytics services are emerging alongside traditional cloud-based storage. For example, GE recently announced that US utility Exelon will use its cloud-based Predix platform to analyse data from its entire generation fleet, seeking a 20% reduction in operation and maintenance costs.

The Economist Intelligence Unit’s 2013 survey of 250 senior executives in the utilities sector across Western Europe found that just 36% of firms had a unified data management strategy, while 64% felt that their organisation had data silos, in which data was not shared across the organisation. A significant proportion (43%) estimated that less than half of the data their company collected was used by the business.

“Data is one of the most strategic assets a utility can own, and it is unfortunate that utilities are waking up to the value of enterprise data at the same time their data is growing at an exponential rate.”

– Stuart Ravens, principal research analyst contributing to Navigant Research’s Utility Transformation team, writing in Utility Dive

Machine learning is increasingly seen as an important tool in energy markets, with applications ranging from identifying hidden usage patterns and optimising thermostat controls to integrating electric vehicle charging points into the electricity grid.

According to the Financial Times, DeepMind Technologies, an artificial intelligence developer owned by Google, is in discussions with National Grid about using artificial intelligence to help balance energy supply and demand in Britain. DeepMind’s algorithms have already been responsible for a 15% reduction in energy consumption in Google’s data centres, achieved through more accurate load prediction that led to more efficient use of cooling systems. The same algorithms could be used to predict demand patterns more accurately and help balance the national energy system more efficiently.

In recent years we have seen the consequences of poor data management, as major utilities have experienced significant billing issues while upgrading their IT systems. A report by energy consultants Inenco in March 2017 found that as many as one in five UK businesses may have received incorrect energy bills due to a combination of human error and complex data flows. Getting it wrong can have significant negative consequences for utilities and consumers alike.

“In the past, often information technologies could be added without fundamentally changing the business. Smart Grid Technologies, coupled with Big Data Analytics, are going to be transformative to utilities. They will disrupt the status quo of operations, organisational management, customer relations, and regulatory interactions. This will require that utility managers embrace data in new ways. The utility industry will need to go from being reactive to being proactive in using data.”

– Big Data Issues and Opportunities for Electric Utilities, Beth-Anne Schuelke-Leech, Betsy Barry, Matteo Muratori, B.J. Yurkovich

Big data brings big data security headaches

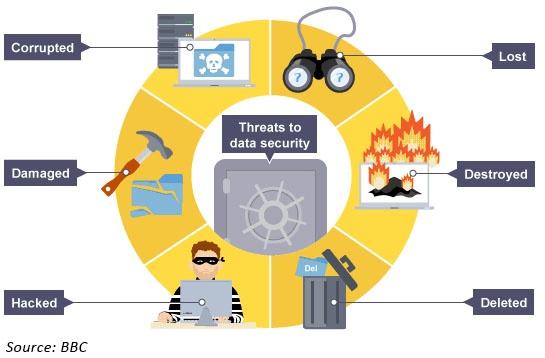

In December 2015, in what is believed to be the first successful cyber-attack on a power grid, hackers reportedly switched off substations in Ukraine remotely and disabled IT components, while a denial-of-service attack was also made on a call centre. More than 200,000 people were left without power for over eight hours. In a 2017 report, the US Department of Energy said that the US electricity system “faces imminent danger” from cyber-attacks, which are growing more frequent and sophisticated.

Most transmission and distribution systems in the industry are implemented using Supervisory Control and Data Acquisition (SCADA) systems. According to Schuelke-Leech et al., quoted above, legacy SCADA systems are typically maintained rather than replaced, for cost reasons. These legacy systems are generally simple and lack the security features needed to protect against malicious attacks originating on newer technology networks. This is concerning, because they are often the last line of defence and have complete control over power system operation and management.

Cyber extortion is a particular concern: ransomware is expected to become a far greater threat in future than it has been to date, and energy networks are particularly sensitive targets. The development of “smart cities”, which use data to deliver enhanced services within connected and intelligent urban areas, together with the connected-home concepts enabled by the Internet of Things, will leave corporations, municipalities and individuals alike vulnerable to criminal attack.

Another concern is the rise of the so-called “brick attack”, in which hackers use malware to infect the computers that store information and destroy all the data on them, rendering the machines completely useless. Such malware can disable firewalls and block antivirus programs by altering settings. In 2012, Saudi Aramco, the world’s largest oil company, was hit by a brick attack that destroyed some 30,000 computers.

“Cyber criminals are harnessing this new digital reality, in which they can reach out across the globe, anonymously, risk-free. They attack systems, data and networks virtually without intervention and traditional defences are no longer adequate.”

– Troels Oerting, Group Chief Information Security Officer, Barclays, quoted in the Institute of Directors’ Cyber Security report, March 2017.

Hand in hand with the issue of security goes the issue of privacy. As utilities and other organisations are able to collect vast amounts of personal data, questions around anonymisation and usage arise alongside fundamental questions of security. Recently, there have been reports that intelligence agencies were using smart TVs and other devices to spy on individuals, and that technology companies were using phone microphones to capture personal data used for targeted advertising. As more devices become connected and capable of supplying personal data, these issues will multiply – standards will inevitably be set by governments and regulators, and compliance expected across the board.

Without standardisation, big data risks being a big mess

Standardisation is essential in any mass-deployed system. Chaos would reign if every electrical device had a different plug, or if house-builders had the freedom to use any type of socket. In fact, it took about 60 years for the first version of the modern plug and socket standard to emerge; before this, a wide range of 2- and 3-pin sockets with different current ratings were in use, many of them governed by their own standards.

It wasn’t until the Electrical Installations Committee, which sat during the Second World War, examined all aspects of electrical installations in buildings that modern standards emerged. The Committee made a series of recommendations, including the use of ring mains, out of which emerged BS 1363, the standard for the 3-pin 13 amp plugs in use today.

A similar degree of standardisation is needed for the new generations of smart appliances, which need not only standard power connections, but also standard communication protocols.

Currently there are around half a dozen competing protocols for the short-range communications required by smart homes. Even where systems use the same general family of standards, they may not be interoperable, as suppliers apply varying degrees of customisation in their deployments.

This lack of standardisation inhibits both product innovation by providers and take-up by consumers, all of whom want to avoid stranded assets or investments. Greater co-ordination between industry and government is needed to drive standardisation and enable smart home innovation to enter the mainstream. Although premature standardisation risks stifling innovation, it is to be hoped that this time it will take fewer than 60 years!

Looking forwards

When people speak about technological developments that have the potential to revolutionise markets, it’s not long before someone mentions blockchain. In decentralised energy markets, blockchain could facilitate secure peer-to-peer energy trading. According to a report by PwC, blockchain has potential beyond transaction execution and could also provide the basis for metering, billing and clearing processes.

“Blockchain technology has the potential to radically change energy as we know it, by starting with individual sectors first but ultimately transforming the entire energy market.”
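Much of the security that makes blockchain attractive for peer-to-peer trading comes from chaining records together by hash. The sketch below is a deliberately minimal illustration of that one idea, not a blockchain: it keeps a single, local, append-only log of hypothetical trades in which each record stores the SHA-256 hash of its predecessor, so altering any past trade breaks verification. The `TradeLedger` class and its fields are assumptions for illustration; a real platform would add distribution across participants, a consensus mechanism and digital signatures.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(body: dict) -> str:
    """SHA-256 of a canonical (key-sorted) JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class TradeLedger:
    """Hypothetical append-only, hash-chained log of peer-to-peer energy trades."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    def append(self, seller: str, buyer: str, kwh: float, price_per_kwh: float) -> dict:
        # Each new record commits to the hash of the one before it.
        prev_hash = self.records[-1]["hash"] if self.records else GENESIS_HASH
        body = {"seller": seller, "buyer": buyer, "kwh": kwh,
                "price_per_kwh": price_per_kwh, "prev_hash": prev_hash}
        record = {**body, "hash": record_hash(body)}
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash and check each record points at its predecessor."""
        prev_hash = GENESIS_HASH
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or record_hash(body) != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True


if __name__ == "__main__":
    ledger = TradeLedger()
    ledger.append("house_a", "house_b", kwh=3.0, price_per_kwh=0.12)
    ledger.append("house_c", "house_b", kwh=1.5, price_per_kwh=0.11)
    print(ledger.verify())
    ledger.records[0]["kwh"] = 30.0  # tamper with an earlier trade
    print(ledger.verify())
```

Editing an earlier trade after the fact, for example changing its `kwh`, makes `verify()` fail because the stored hash no longer matches the record’s contents; in a real distributed ledger, other participants holding copies of the chain would reject the altered history.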

Whatever new technologies may bring, it’s clear that big data in energy is here to stay and is already changing the shape of the markets. This presents challenges, but also opportunities – the smart money will be on those companies that understand how to harness this data to remove inefficiencies and provide increasingly personalised energy services.
