Ofgem has now published National Grid’s technical analysis of the 9 August blackout. Much of the meat is contained in the appendices. The report does not answer many of the questions that have been asked in relation to the incident, and throws up a number of issues that, while they may be important in certain contexts such as the operation of the railway network, are not really relevant to the questions that are pertinent from an electricity system perspective. I posed some questions in my first post on this subject, but I think these can now be updated as follows:

- It is clear that Hornsea did not meet its Grid Code obligations, and that its compliance testing was not complete at the time of the incident. Changes have since been made to its protection systems to make them compliant. Why was the plant allowed to operate before compliance testing was complete, and how can we be certain the changes to its systems will work as desired?

- Why did the Little Barford steam turbine fail to ride through the fault, and why did the steam bypass systems not work, meaning the plant was also in breach of its Grid Code obligations? Since its compliance testing was carried out in 2013, was the testing adequate to determine fault ride through capabilities, or has there been a subsequent change that reduced the plant’s ability to tolerate system disturbances?

- National Grid says it has no ongoing obligations to monitor Grid Code compliance. Is this satisfactory when an increasingly intermittent and de-centralised system is likely to be less stable, making fault ride through capabilities potentially more important?

- Should National Grid pay more attention to the impact of the potential loss of embedded generation that may be triggered by the same event that causes a single largest loss of infeed event? Did National Grid fail to take account of the lessons from the last major blackout in 2008, where the loss of embedded generation also played a role?

- Why is the report only concerned with National Grid’s communications with the market? The REMIT notices issued by both RWE and Orsted were inaccurate and even misleading, particularly in relation to Hornsea. Should steps be taken to improve the communications all parties make to the market?

What does the technical report tell us: lightning strike and immediate network impact

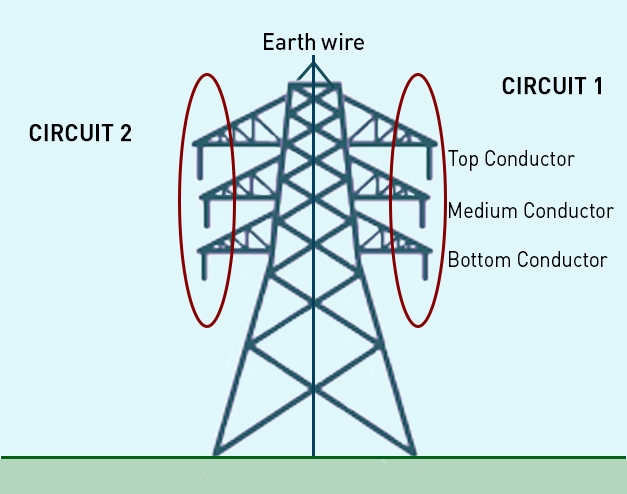

The 9 August blackout was triggered when, at 16:53, lightning struck one of the Eaton Socon – Wymondley Main circuits approximately 4.5 km from the Wymondley Main substation end. The Eaton Socon – Wymondley Main circuit is a 400 kV double circuit overhead line running from Eaton Socon substation near St Neots in Cambridgeshire to Wymondley Main substation near Stevenage in Hertfordshire. This circuit runs for just over 35 km and is made up of pylons carrying two circuits, each with 3 sets of associated conductors, one each side of the tower as depicted in the diagram, and an earth wire at the top of the tower.

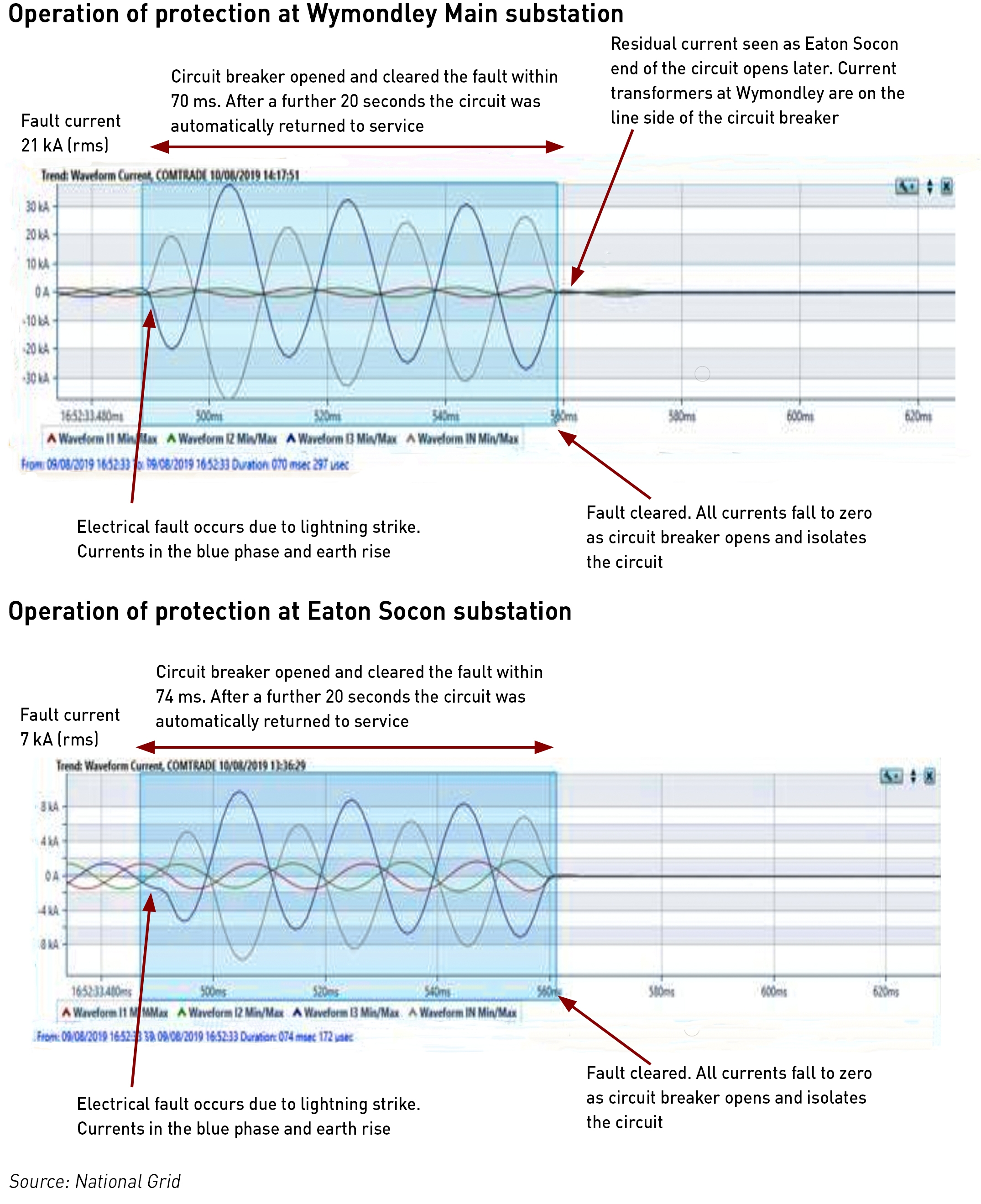

The lightning struck the middle conductor on one side of the pylon causing an electrical fault between the middle (blue phase) conductor and the tower which is earthed to the ground. Both the voltage and current were affected – the voltage was reduced by approximately 50% while currents of 21 kA were recorded at Wymondley Main and 7 kA at Eaton Socon. These effects are within acceptable parameters and are not unusual for this type of lightning strike event.

Each transmission circuit has protection systems which detect faults and clear them by opening circuit breakers at each end of the circuit. The Grid Code requires that the 400 kV primary main circuit breaker protection operates within 80 ms to clear faults. In this event the circuit breaker at Wymondley Main substation opened in 70 ms and that at Eaton Socon opened in 74 ms, clearing the fault from the network.

National Grid is also required to ensure that the system remains within specified limits for voltage, and that current should not exceed the circuit rating following a range of defined fault events, including the prolonged loss of two circuits. This event saw the loss of only one circuit for a duration of only 20 seconds. Transient voltages and currents were seen that were within Grid Code tolerances and steady state system voltages and currents remained within the limits defined within the National Electricity Transmission System Security and Quality of Supply Standards (“NETS SQSS”) following the event.

National Grid reported the voltage variation at Ryhall which is the next substation along from Eaton Socon. A 44% drop in the blue phase voltage was observed, for a duration including recovery of 100 ms.

The voltage variation at Necton Substation in Norfolk was also reported as a proxy for the conditions at Killingholme where Hornsea is connected (the quality monitoring equipment at Killingholme was unavailable due to planned maintenance). A voltage depression of 21% was observed which lasted (including recovery) for 80 ms. The effects were lower than at Ryhall due to the effects of electrical impedance along the line.

National Grid claims that its physical grid systems behaved in line with technical requirements, and this appears to be borne out by the evidence presented in the report. In this respect, National Grid met its obligations as system operator.

What does the technical report tell us: Hornsea

According to the report provided by Orsted:

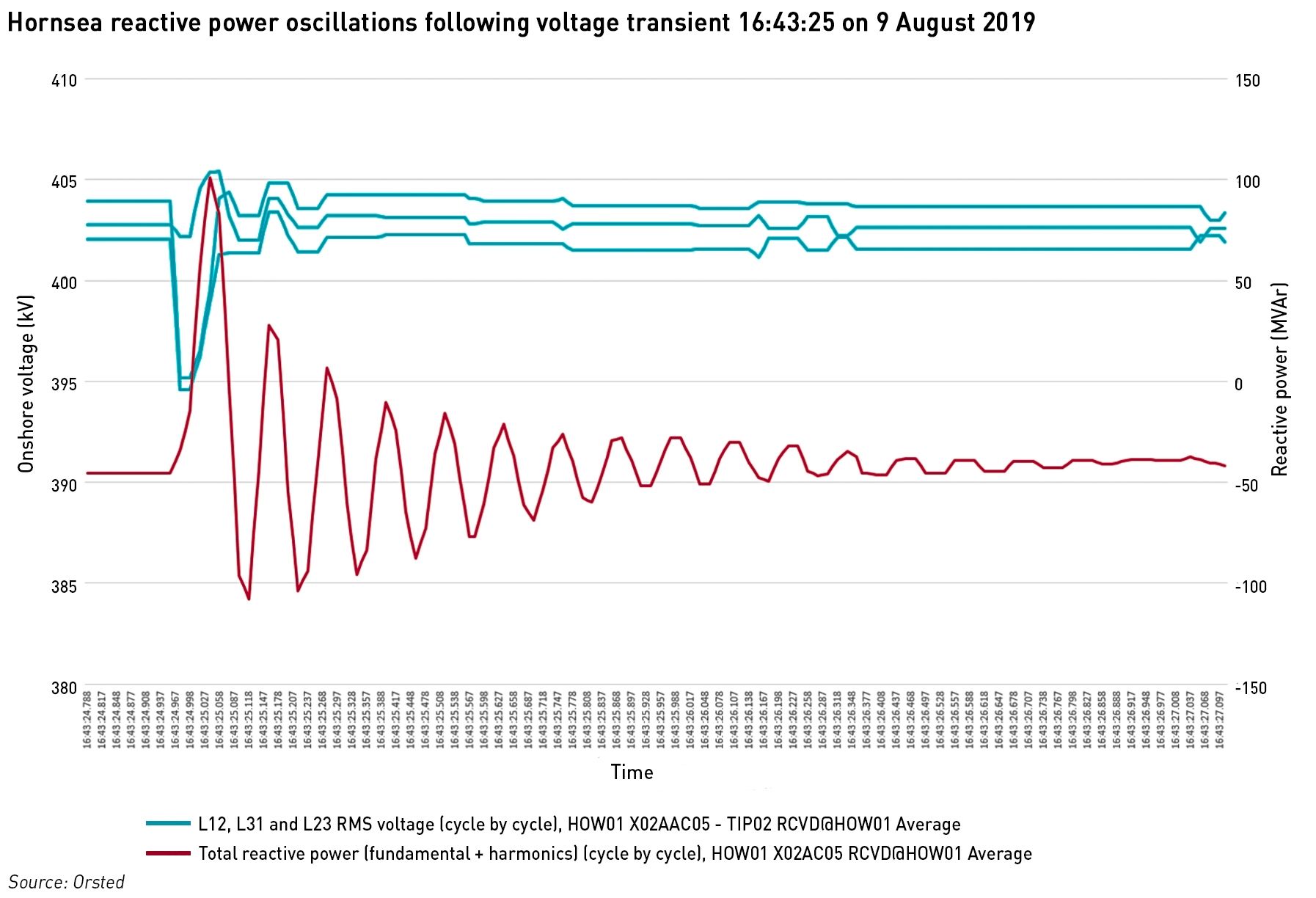

“Initially the offshore wind farm responded as expected by injecting reactive power into the grid thereby restoring the voltage back to nominal. However, in the following few hundred milli-seconds, as the wind farm active power reduced to cope with the voltage dip and the reactive power balance in the wind farm changed, the majority of the wind turbines in the wind farm were disconnected by automatic protection systems. The de-load was caused by an unexpected wind farm control system response, due to an insufficiently damped electrical resonance in the sub-synchronous frequency range, which was triggered by the event.

Since the event, the control system software has been updated to mitigate the observed behaviour of Hornsea One to stabilise the control system to withstand future grid disturbances in line with grid code and connection agreement requirements.”

I would imagine that most people, even working in the energy industry, will struggle to understand what this means. That includes me – I’m not an electrical engineer – but I’m going to have a stab at unravelling what it does mean and what implications it may have more broadly.

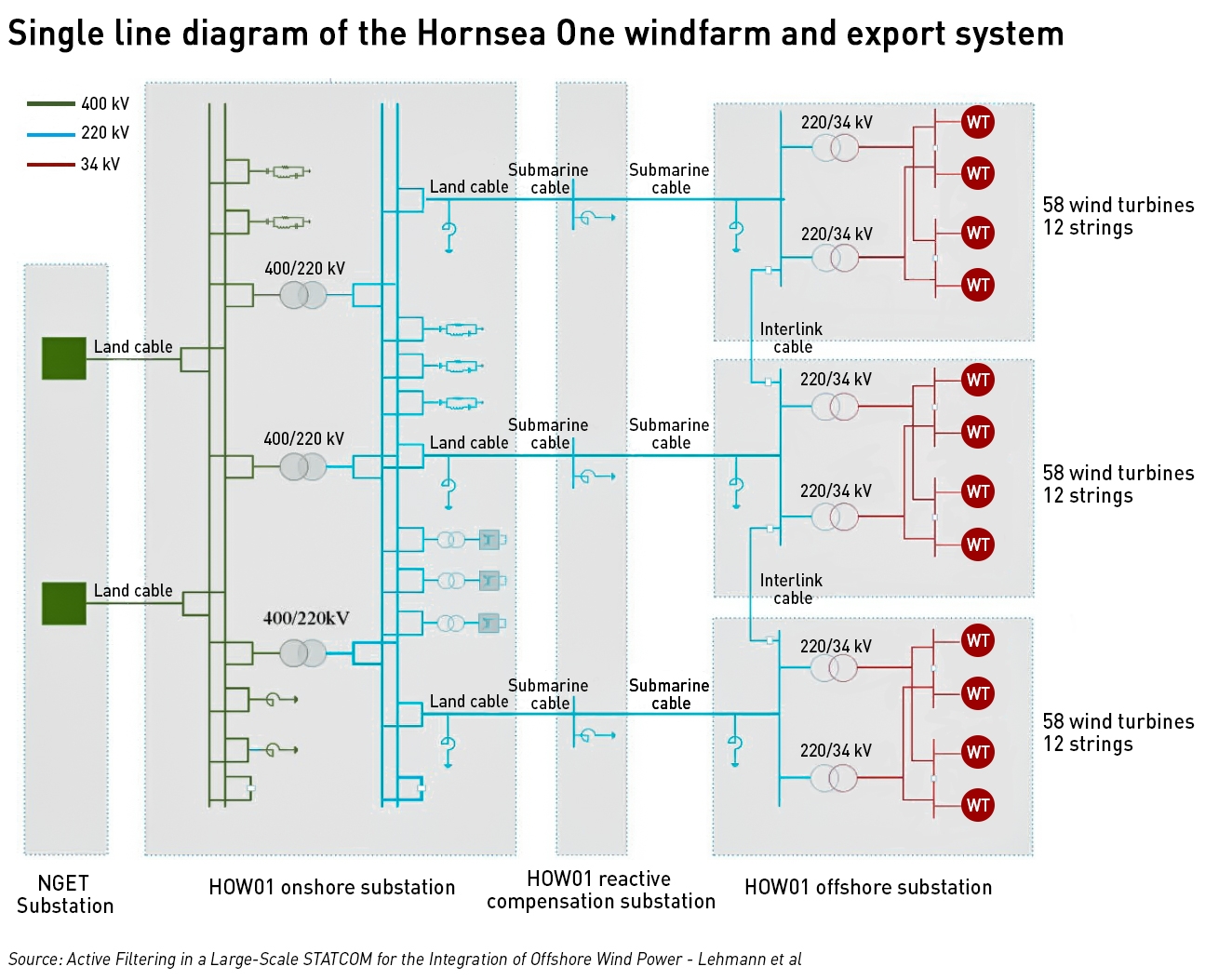

The Hornsea One windfarm consists of 174 wind turbines of 7 MW each, grouped into three clusters of 58 units (phases 1A, 1B and 1C of the windfarm) and 12 strings which are connected to three offshore substations. Two grid transformers per substation step the voltage up from 34 kV to 220 kV, and the power is then transmitted to shore via three HVAC cables of approximately 170 – 190 km in length. The reactive power generated by these cables is compensated via three fixed shunt reactors at the offshore substations, three fixed shunt reactors at the reactive compensation substation, and three variable shunt reactors at the onshore substation.

There are also three harmonic filters connected to the onshore substation 200 kV busbars for damping low-frequency resonance. Three STATCOMs (static compensators) are responsible for voltage and reactive power compliance at the point of connection to the 400 kV network. Three auto transformers are used to step up the voltage to 400 kV prior to connecting to the transmission system at the Killingholme substation. There are also two harmonic filters connected to the 400 kV busbars of the onshore substation to damp high frequency harmonics with two fixed shunt reactors.

This paper (Active Filtering in a Large-Scale STATCOM for the Integration of Offshore Wind Power – Lehmann et al) by a group of engineers from Siemens and Dong (Orsted) contains a lot of technical detail on the installation which is probably more detailed than I need for the purposes of this post, but I’m linking it in case anyone is interested to read more.

Role of the reactive compensator

I think the relevant part of the Hornsea infrastructure is the reactive compensator. In order to carry electrical power efficiently from offshore windfarms to demand centres, a technique known as series compensation is used to decrease the reactance of the line, enabling more power to be transferred. Series compensation increases angular and voltage stability in addition to increasing the active power transmission over the transmission line. Series compensation involves connecting a capacitor in series with the transmission line. If a fault or overload occurs in the transmission system, a large current will flow across the capacitor.

One feature of a series-compensated transmission line is that it produces resonance at frequencies lower than the system frequency of 50 Hz. The resonance occurs because the collapsing magnetic field of the inductor generates an electric current in its windings that charges the capacitor, and then the discharging capacitor provides an electric current that builds the magnetic field in the inductor. This process is repeated continually, but is damped by the presence of resistors (loads) in the circuit.

This is known as sub-synchronous resonance, and it can cause mechanical stress in connected turbines because of the high torsional stress it produces in the rotor shaft. The phenomenon was first discovered at the Mohave plant in the USA, which saw two successive shaft failures in 1970 and 1971 as a result. The formal definition provided by the IEEE is:

“Sub-synchronous Resonance (“SSR”) encompasses the oscillatory attributes of electrical and mechanical variables associated with turbine-generators when coupled to a series capacitor compensated transmission system where the energy interchange is lightly damped, undamped, or even negatively damped and growing. Sub-synchronous oscillation is an electric power system condition where the electric network exchanges significant energy with a turbine-generator at one or more of the natural frequencies of the combined system below the synchronous frequency of the system following a disturbance from equilibrium.”
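To make the idea of a resonance below 50 Hz a bit more concrete, here is a minimal sketch of the textbook relationship. An inductor and capacitor resonate at 1/(2π√(LC)), and for a line whose inductive reactance at 50 Hz is partly offset by a series capacitor, that works out to 50 Hz multiplied by the square root of the ratio of capacitive to inductive reactance. The reactance values below are purely illustrative assumptions and are not Hornsea data.

```python
import math

SYSTEM_FREQUENCY_HZ = 50.0


def lc_resonant_frequency(inductance_h: float, capacitance_f: float) -> float:
    """Natural frequency of an ideal LC circuit: f = 1 / (2*pi*sqrt(L*C))."""
    return 1.0 / (2.0 * math.pi * math.sqrt(inductance_h * capacitance_f))


def series_compensated_resonance(x_line_ohm: float, x_cap_ohm: float) -> float:
    """Electrical resonance of a series-compensated line.

    Both reactances are quoted at the 50 Hz system frequency. The result is
    below 50 Hz whenever the capacitor offsets only part of the line
    reactance (x_cap_ohm < x_line_ohm).
    """
    return SYSTEM_FREQUENCY_HZ * math.sqrt(x_cap_ohm / x_line_ohm)


# Hypothetical line: 100 ohm inductive reactance with 40% series compensation.
x_line = 100.0
x_cap = 40.0
f_res = series_compensated_resonance(x_line, x_cap)

# Cross-check against the plain LC formula by converting reactances to L and C.
omega0 = 2.0 * math.pi * SYSTEM_FREQUENCY_HZ
inductance = x_line / omega0
capacitance = 1.0 / (omega0 * x_cap)
f_lc = lc_resonant_frequency(inductance, capacitance)

print(f"Resonant frequency: {f_res:.2f} Hz (LC cross-check: {f_lc:.2f} Hz)")
```

With these made-up numbers the resonance sits at roughly 32 Hz, comfortably in the sub-synchronous range. How strongly it is damped depends on the resistance in the circuit, which this sketch ignores.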

There was also an incident in Texas in 2009 where an undamped power oscillation occurred due to the interaction between a doubly-fed induction generator (“DFIG”) wind turbine and series compensation capacitor. There was a fault in the transmission system, which was cleared by a circuit breaker operation which led the DFIG wind farms to be in a radial connection with the series compensated transmission line. As a result of this radial connection, increasing oscillations were seen in the voltage and current of the system, which damaged the converters of the wind turbines. The oscillation grew rapidly and quickly reached a peak value of voltage up to 195% of its nominal value. The oscillation was damped when the series capacitor was bypassed after 1.5 s.

Initial research into this incident indicated that these oscillations occurred because of the interaction between converter controllers of DFIG and the series compensation capacitor, in a phenomenon known as sub-synchronous control interaction (“SSCI”). Other instances of SSCI in DFIG wind farms have been observed since.

The effects of SSR can be mitigated by the introduction of static compensators (“STATCOMs”). The Hornsea windfarm has STATCOMs that use voltage source converter (“VSC”) technology which in general converts an input dc voltage into a three-phase output voltage at fundamental frequency, with rapidly controllable amplitude and phase angle. The Hornsea design also calls for them to provide active filtering of the voltage harmonics. These papers describe the technology in more detail.

The technical analysis from Orsted states that the de-load was caused by an unexpected wind farm control system response, due to an insufficiently damped electrical resonance in the sub-synchronous frequency range, which was triggered by the event.

The oscillation can be seen in this chart from the report, which explains that in the event of an overcurrent, the turbines automatically de-load as part of “the industry standard protection system to avoid permanent damage to the generators”. That may be the case – in the event of an overcurrent – but the fact that no other windfarms at a similar or lower electrical distance from the lightning strike were forced to de-load suggests that the protection systems at Hornsea were not working correctly.

The existence of SSRs is not unexpected in a series-compensated transmission system that is experiencing a fault, such as the one caused by the lightning strike. The report goes on to say that while the potential for oscillations had been considered, there had been no reason to suggest that Hornsea One would respond to a fault on the grid in the way that it did in the event. It is also interesting to note that only phases B and C de-loaded; the Hornsea 1A turbines did not disconnect.

Fault ride through obligation

Section CC.6.3.15 of the Grid Code requires generators such as Hornsea One to “remain transiently stable and connected to the System without tripping…for a close-up solid three-phase short circuit fault or any unbalanced short circuit fault on the Onshore Transmission System….for a total fault clearance time of up to 140 ms”.

The short circuit on 9 August was cleared after 74 ms, within the fault ride through capability required in the Grid Code. In this sense, it seems clear that Orsted was in breach of its Grid Code obligations. The report states that “prior to the event, each stage of Hornsea One had been successfully modelled and physically tested in line with all grid code requirements”.

The use of the term “modelled” is interesting here. Hornsea One has the world’s first offshore reactive compensation station, and the Lehmann et al paper referenced above suggests that the active filtering provided by the STATCOM was new. The paper says that:

“Multilevel STATCOMs in HVAC connected offshore WPPs can serve as dynamic and controllable damping device for harmonics (in addition to dynamic voltage support) which can replace or supplement passive filters used to ensure compliance with the grid code requirements and reduce harmonic component stress effectively.”

As the system failed to behave as expected, and as required by the Grid Code, it is tempting to wonder to what extent the performance of the protection systems was modelled rather than physically tested, and whether sufficient testing of either sort was carried out prior to operation.
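The timing comparison behind the fault ride through argument above can be sketched in a few lines. The breaker times and the 80 ms and 140 ms limits are the figures quoted in this post; treating ride through as a single time threshold is my simplification, since the real Grid Code requirement has more conditions than this.

```python
# Figures quoted in this post (milliseconds).
PROTECTION_LIMIT_MS = 80      # Grid Code limit for 400 kV main protection
RIDE_THROUGH_LIMIT_MS = 140   # fault clearance time generators must ride through

breaker_opening_ms = {
    "Wymondley Main": 70,
    "Eaton Socon": 74,
}

# The fault is only off the network once the slower breaker has opened.
fault_cleared_ms = max(breaker_opening_ms.values())

# Did the network protection meet its own obligation?
protection_compliant = all(
    opening <= PROTECTION_LIMIT_MS for opening in breaker_opening_ms.values()
)

# Simplifying assumption: generators are expected to stay connected for any
# fault cleared within the ride through limit.
generators_expected_to_ride_through = fault_cleared_ms <= RIDE_THROUGH_LIMIT_MS
margin_ms = RIDE_THROUGH_LIMIT_MS - fault_cleared_ms

print(f"Fault cleared in {fault_cleared_ms} ms")
print(f"Network protection compliant: {protection_compliant}")
print(f"Ride through expected: {generators_expected_to_ride_through} "
      f"(margin {margin_ms} ms)")
```

On these numbers the fault was cleared with 66 ms to spare against the ride through limit, which is why the disconnections look like a generator-side problem rather than a network one.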

What does the technical report tell us: Little Barford

The technical report from RWE makes little effort to explain what happened to the plant, beyond what was already known: the steam turbine tripped, and the steam by-pass systems for the gas turbines failed to work causing unsafe steam build-up which tripped off the first gas turbine and caused staff to disconnect the second for safety reasons.

“A comprehensive investigation of hardware, software, fault handling and diagnostic coverage for the conditions, that the ST was subjected to during this rare system disturbance, is ongoing…. Upon initiation of the Steam Turbine Trip the Gas Turbines went into bypass mode of operation, which is the normal response to allow sustained operation. For reasons presently unknown a high-pressure excursion occurred on GT1A resulting in an automated trip. Given the prevailing conditions all systems functioned as expected. GT1B was manually tripped shortly after as a result of excessive steam pressure.”

The question of why these things happened is not answered, although there is a suggestion that the plant tried to switch to back-up battery systems that are designed to ensure continued supply to all parts of the plant in the event of a disruption in the grid supply.

RWE’s report is unsatisfactory in a number of respects: it provides no explanation of why the plant behaved as it did, and describes the grid fault as a “rare system disturbance”. This is at odds with the National Grid analysis which indicates that not only are lightning strikes on the transmission system very common, but that the grid infrastructure behaved as expected in response and the fault was cleared well within the 140 ms fault ride through obligations generators are required to meet under the Grid Code.

From having initially been more sympathetic in relation to the Little Barford trip, I am significantly less so having read this report. However, I am still inclined to think that the Little Barford trip is a plant-specific issue that has no real implications for the wider power system other than the issue of ensuring Grid Code compliance.

What does the technical report tell us: Compliance Testing

Since both generators appear to have been in breach of their Grid Code obligations, the report contains a section on compliance testing and monitoring:

“Generators are responsible for demonstrating and maintaining compliance with the Grid Code both when connecting to the system initially and on an ongoing basis…. Compliance is demonstrated through a combination of studies, simulations and testing of the generator… The ESO is not responsible for checking compliance on an ongoing basis.”

This last point is significant: there is an inherent assumption that compliance is only affected by changes made by the generator after initial testing, and as long as the generator has not notified National Grid of any changes, it assumes the plant remains compliant. In the best case, this assumes that initial testing is perfect, and anticipates all the scenarios under which the Code obligations might be challenged in real life operation.

In the case of Hornsea, it is evident that not all of the compliance testing had been carried out at the time of the incident, so it might be the case that the outstanding tests would have uncovered the problems with the protection system configuration. In relation to Little Barford, the compliance testing took place in 2013 when the turbines were replaced.

The report does not provide any analysis of this information, nor does it comment on whether the outstanding tests at Hornsea would have uncovered the configuration issues, which is pretty unsatisfactory. As the electricity system becomes more inherently unstable due to the higher penetration of intermittent generation, it may be that the fault ride through obligations become more important. Monitoring this would not be an easy thing to do – deep in Appendix M is a reference to this – testing for fault ride through directly would involve creating a system fault which would be risky and impractical, but this does not mean the issue should be ignored.

Discovering that a generator is not complying with its fault ride through obligations after a major outage is not acceptable – Ofgem should consider whether the current compliance testing regime is adequate and whether National Grid should be responsible for monitoring compliance on an ongoing basis.

What does the technical report tell us: Security and Quality of Supply Standards

The Security and Quality of Supply Standards (“SQSS”) set out the types of event which the system must be able to withstand, and the frequency limits with which the system operator must comply. There are two types of infeed loss set out in the SQSS:

- Normal Infeed Loss: the maximum loss the system should be able to manage without the frequency going below 49.5 Hz

- Infrequent Infeed Loss: a higher level of infeed loss dictated by a small number of larger potential losses for which the frequency should not go below 49.2 Hz and should recover to 49.5 Hz within one minute.

The SQSS anticipates that only one of these would happen at any one time, which is known in engineering terms as N-1, i.e. the network remains secure following one such loss.
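The two frequency limits above can be expressed as a simple check on a frequency trace. This is only a sketch of the rules as I have summarised them: the trace format, the function names and the sample traces are all my own hypothetical inventions, and the check assumes a single excursion below 49.5 Hz.

```python
from typing import List, Tuple

NORMAL_LIMIT_HZ = 49.5       # floor for a normal infeed loss
INFREQUENT_LIMIT_HZ = 49.2   # floor for an infrequent infeed loss
RECOVERY_WINDOW_S = 60.0     # time allowed to get back to 49.5 Hz

# A trace is a list of (time in seconds, frequency in Hz) samples.
Trace = List[Tuple[float, float]]


def meets_normal_limit(trace: Trace) -> bool:
    """Frequency never goes below 49.5 Hz."""
    return min(freq for _, freq in trace) >= NORMAL_LIMIT_HZ


def meets_infrequent_limit(trace: Trace) -> bool:
    """Frequency never goes below 49.2 Hz and is back at 49.5 Hz within a minute."""
    if min(freq for _, freq in trace) < INFREQUENT_LIMIT_HZ:
        return False
    times_below = [t for t, freq in trace if freq < NORMAL_LIMIT_HZ]
    if not times_below:
        return True
    # Assumes one excursion: span from first to last sample below 49.5 Hz.
    return (max(times_below) - min(times_below)) <= RECOVERY_WINDOW_S


# Hypothetical traces, not measurements from 9 August.
mild_dip = [(0.0, 50.0), (5.0, 49.7), (20.0, 49.6), (60.0, 49.9)]
deep_dip = [(0.0, 50.0), (5.0, 49.3), (30.0, 49.4), (50.0, 49.6)]
breach = [(0.0, 50.0), (5.0, 49.1), (40.0, 49.6)]

for name, trace in [("mild", mild_dip), ("deep", deep_dip), ("breach", breach)]:
    print(name, meets_normal_limit(trace), meets_infrequent_limit(trace))
```

A real assessment would work from high-resolution measurements and the precise wording of the SQSS, but the structure of the test is the same: a floor, and a deadline for recovery.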

On 9 August, the largest single source of input to the system was the IFA interconnector with France which was importing 1,000 MW. Given the distance (and therefore degrees of separation in electrical terms) between the plants that disconnected, National Grid considers that there were 3 separate losses on the day: Hornsea (737 MW), Little Barford (641 MW) and embedded generation tripping due to vector shift protection (150 MW), the sum of which was 1,528 MW, higher than the largest potential single infeed loss. A further 350 MW of embedded generation tripped due to rate of change of frequency (“RoCoF”) protection.

“In securing for the largest loss, the ESO also takes into account the known protection setting historically specified in the Distribution Code for RoCoF of 0.125 Hz/s…. The ESO also considers the impact of vector shift protection on embedded generation.”
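The loss figures above are easy to lose track of, so here is the arithmetic laid out explicitly. All the megawatt figures are those quoted in this post; the variable names are just mine.

```python
# Losses National Grid treats as the three separate events (MW).
initial_losses_mw = {
    "Hornsea": 737,
    "Little Barford": 641,
    "Embedded generation (vector shift)": 150,
}

# Further embedded generation lost to RoCoF protection (MW).
rocof_embedded_loss_mw = 350

# Largest single infeed on the day: the IFA interconnector (MW).
largest_single_infeed_mw = 1000

initial_total_mw = sum(initial_losses_mw.values())
total_loss_mw = initial_total_mw + rocof_embedded_loss_mw
embedded_total_mw = (
    initial_losses_mw["Embedded generation (vector shift)"] + rocof_embedded_loss_mw
)

print(f"Initial combined loss: {initial_total_mw} MW")
print(f"Including RoCoF trips: {total_loss_mw} MW")
print(f"Embedded generation lost in total: {embedded_total_mw} MW")
print(f"Excess over largest single infeed: "
      f"{total_loss_mw - largest_single_infeed_mw} MW")
```

The embedded generation alone accounts for 500 MW of the total, which is why the question of whether it is included in the reserve sizing matters so much.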

The technical report goes on to describe a range of activities carried out by National Grid in relation to calculating the amount of reserve it needs and how it procures this. However, it does not clearly set out how the potential loss of embedded generation was incorporated into the determination of the amount of reserve held on the day.

Appendix M indicates that against a single largest infeed loss of 1,000 MW, National Grid had 1,022 MW of primary and 1,338 MW of secondary reserve. Had the reserve been sized with an expectation of 500 MW of lost embedded generation in addition to the largest infeed loss, the deficit would have been very small, and it is entirely possible that the frequency limit would not have been breached.

Appendix M also addresses whether the lessons from the 2008 blackout were adequately learned. The report for that event indicates that it was very similar to the events of the 2019 blackout, with the loss of two large generators being compounded by the loss of a significant amount (371 MW) of embedded generation.

While the recommendations in the 2008 report did not suggest that the potential loss of embedded generation should be added to the single largest infeed loss for the purposes of sizing the amounts of reserve held, this does not mean that National Grid should not have considered doing so. Since the 2008 incident, the electricity system has continued to change, and not only is there more intermittent generation, there is also more de-centralised generation.

The focus of the 2008 report was reducing the sensitivity of embedded generation to transmission system faults – and this useful work is ongoing – however, it isn’t unlikely that a system fault that causes a loss of a large generator could also cause the loss of nearby embedded generation (a large generator could be lost either due to an internal fault with the generator, which should not impact nearby plant, or due to a transmission system disturbance, which may very well also affect local embedded generation). The amount of reserve held should not assume the single largest infeed loss is only the result of an internal plant fault that has no wider associated impact.

In its report, National Grid agrees that the SQSS should be reviewed, and given that the costs of reserve procurement are recovered from consumers, it could argue that holding reserves in excess of the SQSS requirement would be inappropriate. However, this would not prevent it from engaging with Ofgem in revising the condition without needing a major blackout as a trigger.

What does the technical report tell us: system inertia

The answer to this is: almost nothing. There is a brief reference to inertia in Appendix M, with a table of inertia immediately before, during and after the event, but these data are completely out of context. National Grid says that it does not directly measure inertia – inertia is only modelled – and there is no inertia reporting, so market participants have no means of understanding the actual day to day relationship between the generation mix and inertia levels.

Back in May there was a big song and dance about the system running without coal, but over the summer there has been a steady use of coal with 1-2% of demand being regularly met by coal during the working day. During the summer, it is very unlikely that coal is needed to provide capacity, rather it is probably being used to support grid stability when there are large amounts of renewable generation on the system.

However, if we look at the events of 9 August, it is not clear that inertia played a large role on the day. The difference between the loss of generation and the amount of reserves held was so large that the generation mix probably did not make that much difference. Had all the generation been provided by thermal and nuclear plant, such a large deficit would probably still have caused the frequency tolerances to be breached.

This is just speculation on my part, and I certainly think inertia data need to be published to allow wider analysis of the impact of the generation mix on inertia and frequency control.
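For anyone wanting a feel for what inertia does and does not change, the textbook swing equation approximation gives the initial rate of change of frequency as the nominal frequency times the lost power, divided by twice the stored kinetic energy of the system. The sketch below applies it to the loss figures quoted earlier. The inertia levels are hypothetical round numbers chosen by me, not National Grid data, and the formula ignores demand response and reserves entirely.

```python
NOMINAL_FREQUENCY_HZ = 50.0
ROCOF_SETTING_HZ_PER_S = 0.125  # historic Distribution Code setting quoted above


def initial_rocof_hz_per_s(loss_mw: float, system_inertia_mw_s: float) -> float:
    """Initial rate of change of frequency from the aggregated swing equation.

    RoCoF = f0 * loss / (2 * stored kinetic energy), before any reserve responds.
    """
    return NOMINAL_FREQUENCY_HZ * loss_mw / (2.0 * system_inertia_mw_s)


# Hypothetical inertia levels (MW.s), i.e. 200 and 400 GVA.s.
hypothetical_inertia_levels_mw_s = [200_000.0, 400_000.0]

# Losses quoted in this post: largest single infeed and the initial combined loss.
losses_mw = [1_000.0, 1_528.0]

for inertia in hypothetical_inertia_levels_mw_s:
    for loss in losses_mw:
        rocof = initial_rocof_hz_per_s(loss, inertia)
        exceeds = rocof > ROCOF_SETTING_HZ_PER_S
        print(f"inertia {inertia:,.0f} MW.s, loss {loss:,.0f} MW: "
              f"{rocof:.3f} Hz/s (above RoCoF setting: {exceeds})")
```

Higher inertia slows the initial fall in frequency, which buys time for reserves to respond and makes RoCoF trips less likely, but it does not close the gap if the reserves held are smaller than the generation lost. Publishing real inertia data would let this sort of calculation be done with actual numbers.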

So what does it all mean?

National Grid had three different roles that relate to the 9 August blackout, and its performance in these was variable:

- Fault management: the grid response to the lightning strike was appropriate and all equipment appears to have worked as required, within the timescales required. The fault was cleared within the 80 ms required by the Grid Code;

- Frequency management: National Grid had inadequate reserves to meet the losses on the day. Arguably, it should take more account of the potential impact of embedded generation and hold higher reserves. It has recommended that the SQSS be reviewed as a result of the blackout, but it could really have raised this issue before as this vulnerability has if anything increased since the 2008 blackout;

- Grid Code compliance testing: the testing at Hornsea was incomplete and the testing at Little Barford did not identify the plant’s inability to meet its fault ride through obligations. Although National Grid has no responsibility for ongoing compliance monitoring, there are questions to be answered about whether the initial testing was adequate and timely. There are also questions to be answered in relation to the use of new technologies for managing windfarm output and whether they are being adequately assessed before connection to the grid.

Both Orsted and RWE need to address the specific non-compliance of their plant.

There are also questions for Ofgem as the regulator – the regime under which the system operator has no ongoing obligation to monitor code compliance is defined by the regulator. Has Ofgem been sufficiently rigorous and proactive in considering how the structural changes to the electricity system may require changes in both operations (eg the SQSS and sizing of reserves) and code compliance? The regulator needs to balance the cost of greater system security with the benefits that would result, and it may be that the costs are not justified, but this needs to be explicitly determined and communicated to the market.

There has been a lot of noise surrounding the blackout, with people blaming and defending wind generation, and others proclaiming loudly how storage could save the day. Almost all of these points contain half-truths. The integration of wind is creating challenges in many ways – the reduction in inertia and the impact of intermittency are quite widely discussed, but this event also suggests that there are more complex technical challenges around how the technologies for transporting large volumes of power from huge offshore windfarms integrate with the transmission system.

The size and nature of reserves must be reviewed, but this needs to be in the context of the costs as well as the benefits. Battery storage can absolutely provide reserve services – its super-fast response times are very useful to the system – but durations tend to be short and fast frequency response alone cannot support battery investments.

Fundamentally, the causes of the 9 August blackout do not lend themselves to splashy headlines. The questions raised are pretty technical in nature, and the solutions are likely to be similarly technical, but they are important for the secure running of the system as the energy transition continues.

I’m waiting for the E3C interim report which was supposed to have been submitted, if not published, on the 18th. BEIS promised it would be published at their page on the blackout. So far, no sign. It may begin to address some of the other issues you raise that were not covered in the Grid’s report.

Very thoughtful comments, still I have several questions:

1. From the report, could the insufficiently damped SSR be the result of poor controller parameter settings in the wind farm, most likely in the STATCOM?

2. Is overcurrent protection part of the grid code? If so, is it contradictory with the FRT (fault ride through) requirement?

3. Does the term ‘de-load’ mean that some of the wind turbine generator feeders are disconnected, or just that the control system reduces their power output? Why not restore their output once the terminal voltage becomes stable, which might effectively have prevented the blackout?

I don’t know enough about the detailed engineering to know exactly which components were to blame, but there was something in the configuration that failed to respond appropriately to a known electrical problem. As a result, they breached the FRT requirement in the Grid Code.

You’re right to say that the report throws up more questions than it answers, and it would be helpful for the parties to be more transparent about what happened. Moving large amounts of electricity from these huge offshore windfarms to demand centres is a technically difficult challenge, but unless there is some openness about this, it will be hard for the challenges to be addressed efficiently, while policymakers and the public will think the integration of renewables is easy and problem free.

An interesting read, thanks. Who would want to be in charge of this, eh?

A question: if embedded generation is likely to ‘disconnect’ at every major grid fault, and compound the problems, do you think embedded generation needs its own capacity reserve pool? Is this an argument against the deployment of embedded generation that it heightens problems on the grid, or can we flip this logic around?

There’s a lot of work being done to make embedded generators less sensitive to grid disruptions, so they are less likely to trip off. It has been a long and slow process to get the various stakeholders to agree on the appropriate standards, and so this immunisation project is still ongoing.

I don’t think it’s particularly an argument against distributed generation – most of the time it works fine – but I think it’s an argument for doing 2 things:

(1) include embedded generation in the sizing of the transmission reserve requirement

(2) streamline industry codes to make them fit for purpose in a system that is increasingly de-centralised

I’m not so convinced that we are decentralising generation. True, “central” is the Dutch for power station. But we are increasingly relying on major grid connection points for interconnectors and offshore wind clusters making generation increasingly remote from demand, requiring extensive investment in grid capacity and creating high failure risks, including exposure to bad policy outside the UK. (Timera have an interesting post on the factors affecting gas and power markets currently, including French nuclear shutdowns, restricted Groningen production and the ECJ ruling on Nordstream)

https://timera-energy.com/intermarket-shock-roils-power-ttf-jkm/

Very nicely explained, thank you.

I do not understand why the UK is not investing in Synchronous Condensers, which have significant benefits over SVC and Statcoms – https://www.think-grid.org/synchronous-condensers-better-grid-stability.

I continue to read about the installation of new SVC and Statcoms instead of considering the adaptation of existing synchronous generators (a lot of which are completely idle – whether peaking or stand-by turbines or gensets) to synchronous condensers. This would increase the utilisation of existing assets, so it is a more environmentally friendly approach, and it is likely more economical than new SVC and Statcoms.

Would appreciate comments as it seems obvious to me but nothing at all has been done along these lines, so perhaps I must be missing something…?

I am now told by BEIS that we can expect the E3C report this week. Let’s hope they’re not busy redacting it.

The E3C interim report is finally out here:

https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/836626/20191003_E3C_Interim_Report_into_GB_Power_Disruption.pdf

It is rather disappointing in that it offers little that is new, and comments that work is still ongoing to identify causes and make recommendations on issues arising. They do draw particular attention to the fact that apparently when LFDD was triggered, some 600MW of embedded generation was tripped off, leaving just 350MW of effective demand reduction. There are many issues that they appear not to have tackled at all, or where they have barely scratched the surface.
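The LFDD arithmetic is worth spelling out. The short sketch below assumes that effective demand reduction is simply the gross demand disconnected minus the embedded generation lost with it. The 950MW gross figure is only what the two quoted numbers imply under that assumption, not a figure I am quoting from the report.

```python
def effective_demand_reduction_mw(gross_demand_shed_mw, embedded_lost_mw):
    """Net relief to the system: demand disconnected by LFDD minus the
    embedded generation that was disconnected along with it."""
    return gross_demand_shed_mw - embedded_lost_mw


embedded_lost_mw = 600.0   # embedded generation tripped off by LFDD
effective_mw = 350.0       # effective demand reduction

# Gross demand shed implied by the two figures above (an inference).
implied_gross_mw = effective_mw + embedded_lost_mw

# Share of the gross disconnection cancelled out by lost generation.
share_offset = embedded_lost_mw / implied_gross_mw

print(f"Implied gross demand shed: {implied_gross_mw:.0f} MW")
print(f"Share offset by lost embedded generation: {share_offset:.0%}")
```

On these figures, well over half of the load shed was cancelled out by generation disconnected at the same time.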

One big benefit is we have an email address:

I think I shall be writing to them, highlighting the posts here

I agree – I’ve also just read it and was pretty underwhelmed. Hard to see what the point of the report is as there’s almost nothing new in it. Hopefully they will be more rigorous in the final report but while they mention compliance in passing, there’s little attention given to the fact that these generators were not meeting their Grid Code obligations and that is something that first needs to be understood and then addressed. I’d really like to see something detailed and technical from them, and not just fairly superficial commentary on communications protocols…

Now that the election is over, and there is a convenient time to bury bad news, we have the final reports from OFGEM

https://www.ofgem.gov.uk/system/files/docs/2020/01/9_august_2019_power_outage_report.pdf

And E3C

https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/855767/e3c-gb-power-disruption-9-august-2019-final-report.pdf

The £4.5m fine for Hornsea is the value of about a day’s output at full capacity, and relatively much more damaging for Little Barford. A first trawl through finds that Oersted were distinctly cavalier in their approach to the whole incident at the time – restarting production before they understood the fault detail, and clearly knowingly stretching the system before they had installed the software that would have damped the oscillations that caused 2 banks to trip out. Add in that neither report mentions the lies they told publicly, and it seems that they have got off lightly.

There is a discrepancy in the amount of generation that is reckoned to have gone off line between the two reports. It’s quite plain that NG do not consider they have any responsibility towards covering for embedded generation trips (except perhaps for vector shift protected capacity), and neither they nor the distribution companies have the first clue about what actual generation levels at risk might be – nor how distribution networks behave.

From E3C

OFGEM have a similar, though not identical list. It seems that there is still a deal of ongoing business. So far, it seems the new Parliament hasn’t set up Select Committees, but this issue should plainly be close to the top of the new work for the BEIS Committee.

I came across this link, which is interesting and of some relevance to this blog: https://eandt.theiet.org/content/articles/2020/06/renewables-let-s-address-reality/

It finishes up with “Being able to claim a record-breaking ‘coal-free’ period ahead of the next government white paper on energy will look good in front of the politicians. Ofgem has advised ESO of far more important issues requiring its urgent attention.”

— which is referring to the apparent focus of National Grid Electricity System Operator (ESO), or their director, Fintan Slye, on “renewables” rather than issues of grid failures.

Though one could argue that OFGEM, in their role as regulator, should already have been on top of the issue, and made sure that the ESO were doing their job.

That’s interesting, thanks for the link. It’s ironic in light of this that NG has been in the news this week saying that by 2025 we can look forward to “gas-free days”! What they don’t mention is that in this utopia, balancing costs will be through the roof, and reliability will be in question.

The other interesting factor, although this might be specific to the covid situation, is what’s happening in France: low demand is forcing EDF to take its nukes offline this summer to save fuel, and because staff shortages are delaying annual maintenance and pushing some of it into Q4. Q4 French power prices are trending up, and based on current spreads the UK can expect to export through the coming winter, creating a 4 GW deficit against expected supply.

I don’t agree with the remark made in the article about a new regulator though – this idea gets thrown around every now and again, but in real life, any new regulator would get staffed by people from Ofgem, so it would really just be a re-branding exercise aka a waste of money.

It’s hard to come by experienced people about this subject, however, you sound like you know what you’re talking about! Thanks